The Biological Limits of Truth: Why Human Neurology Is Failing to Detect Sophisticated AI Deepfakes and Synthetic Media

The boundary between digital artifice and physical reality has been almost entirely eroded, leaving the human mind increasingly unable to distinguish genuine captures of life from the synthetic outputs of high-fidelity artificial intelligence. Some sociologists and cognitive scientists describe this phenomenon as "epistemic collapse," and it represents more than a technological hurdle: it is a fundamental crisis of social trust and shared reality. As AI tools become more efficient and accessible, the mechanisms that once allowed humans to verify information, form reliable memories, and participate in democratic processes are being systematically undermined by content that slips past the brain’s ordinary checks on what is real.

The Neurological Vulnerability: Why the Brain Is "Hacked" by AI

Recent neuroscience research helps explain why humans are so susceptible to deepfakes. Our biological hardware, shaped by millions of years of evolution, developed to process stimuli from the natural world, a world where seeing was, for all intents and purposes, believing. Studies using functional magnetic resonance imaging (fMRI) and electroencephalography (EEG), published between 2023 and 2024, have begun to identify the neural pathways that synthetic media may exploit.

The primary site of this vulnerability is the fusiform gyrus, a critical region located at the junction of the temporal and occipital lobes. This area is responsible for high-level visual processing, specifically the recognition of faces and complex objects. Research published in the journal Neuron in 2023 revealed that the fusiform gyrus is activated both during the perception of real-world stimuli and during the act of imagination. This region generates what researchers call a "reality signal," which is then transmitted to the medial prefrontal cortex.

Under normal circumstances, the medial prefrontal cortex acts as a gatekeeper, evaluating the reality signal to determine whether an image is real or fabricated. However, high-quality synthetic media, created by Generative Adversarial Networks (GANs) or sophisticated diffusion models, is now so precise that it can trigger a reality signal indistinguishable from that of a genuine photograph. By effectively "hacking" the fusiform gyrus, AI-generated content causes the brain to misclassify artificial stimuli as authentic. Because humans have no evolved defense against media optimized to look real, this prefrontal check can be effectively bypassed, which may lead to the formation of false memories.

A Chronology of the Synthetic Media Revolution

The path to epistemic collapse has been remarkably swift, moving from niche laboratory experiments to global social phenomena in roughly a decade. Understanding this timeline is essential for grasping the scale of the current challenge.

- 2014–2017: The Emergence of GANs. The invention of Generative Adversarial Networks by Ian Goodfellow and colleagues in 2014 provided the mathematical framework for AI to "learn" how to recreate realistic imagery. Early applications were crude and often fell into the "uncanny valley," producing images that looked almost human but were noticeably "off."

- 2018–2020: The Rise of Deepfakes. The term "deepfake," coined on Reddit in late 2017, entered the mainstream. Early uses ranged from harmless face-swapping in films to malicious non-consensual content, and the technology soon spread to political satire and misinformation.

- 2021–2022: The Democratization of Generative AI. Tools like DALL-E, Midjourney, and Stable Diffusion became publicly available. For the first time, anyone with an internet connection could generate photorealistic images from simple text prompts.

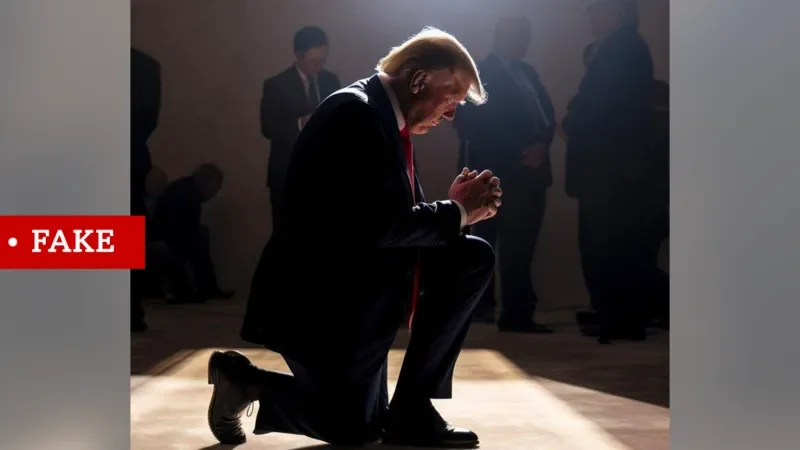

- 2023: The Viral Breakthrough. Images such as "Pope Francis in a Balenciaga puffer jacket" and AI-generated photos of former President Donald Trump being "arrested" (the latter created by Bellingcat founder Eliot Higgins) went viral. Many viewers initially believed these images were real, marking the first widespread realization that visual evidence could no longer be trusted implicitly.

- 2024–Present: The Era of Hyper-Realism. The introduction of video-generation models like OpenAI’s Sora and the refinement of real-time voice cloning have made it possible to fabricate entire events with professional-grade cinematography and audio fidelity.
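The "mathematical framework" mentioned in the 2014–2017 entry is, in its original form, a two-player game. A generator G turns random noise z into candidate images, while a discriminator D estimates the probability that a given image came from real data rather than from G. Goodfellow and colleagues wrote the training objective as the following minimax problem:

```latex
\min_{G} \max_{D} \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_{z}(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

Here p_data is the distribution of real images and p_z is a simple noise prior, such as a Gaussian. In the idealized analysis, the optimum is reached when the generator's output distribution matches p_data, at which point the best possible discriminator outputs 1/2 for every input: a machine-learning version of a coin toss.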

Supporting Data: The Illusion of Detection

While many people believe they possess a "keen eye" for spotting AI-generated content, empirical data suggests otherwise. Large-scale studies involving thousands of participants have found that human detection of synthetic media is barely better than a coin toss and, in aggregate, not reliably distinguishable from chance.

In controlled tests, the average accuracy rate for humans identifying deepfakes was approximately 55.54%. This marginal lead over random chance (50%) is insufficient for any practical application, such as verifying evidence in a courtroom or validating news reports during a fast-moving political crisis. Even AI-generated videos, which are technically more complex to produce and might be expected to betray more flaws, were detected only slightly more often, with accuracy hovering around 57.31%.
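Whether an accuracy of roughly 55% is meaningfully better than guessing depends on how much evidence stands behind it. The sketch below is a simplified, single-study illustration, not the method behind the figures above (aggregate figures like these typically come from pooling many studies): it computes a 95% Wilson score interval for an observed accuracy and checks whether the whole interval sits above 50%. The trial counts are hypothetical.

```python
import math


def wilson_interval(correct: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = correct / trials
    denom = 1 + z**2 / trials
    centre = (p_hat + z**2 / (2 * trials)) / denom
    half_width = (z / denom) * math.sqrt(
        p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)
    )
    return centre - half_width, centre + half_width


def beats_chance(correct: int, trials: int, chance: float = 0.5) -> bool:
    """True only if the entire interval lies above the chance rate."""
    low, _ = wilson_interval(correct, trials)
    return low > chance


if __name__ == "__main__":
    # Hypothetical studies, each with roughly 55.5% of judgments correct.
    for correct, trials in [(111, 200), (1111, 2000)]:
        low, high = wilson_interval(correct, trials)
        print(
            f"{correct}/{trials}: accuracy={correct / trials:.3f}, "
            f"95% CI=({low:.3f}, {high:.3f}), beats chance: {beats_chance(correct, trials)}"
        )
```

With these made-up counts, 200 judgments give an interval of roughly 0.49 to 0.62, which still contains 50%, while 2,000 judgments give roughly 0.53 to 0.58. Even when a lead over chance is statistically detectable, a few points above 50% remains far from the reliability that courtroom or newsroom verification would require.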

The data highlights a dangerous "confidence-competence gap." Most users believe they can spot a fake by looking for "telltale signs" like distorted fingers or unnatural lighting. However, as newer models are refined to eliminate these very flaws, those markers disappear. In a 2023 study, participants often flagged real, slightly blurred photos as "AI" while accepting high-definition synthetic images as "real." This suggests that our criteria for judging what is real are becoming untethered from reality itself.

Societal Impacts: Democracy and the Distortion of Self

The implications of this neurological "hacking" extend far beyond individual confusion; they threaten the foundational structures of modern society.

The Erosion of the Democratic Process

In the political sphere, deepfakes introduce what legal scholars Bobby Chesney and Danielle Citron termed the "Liar’s Dividend." This occurs when the mere existence of deepfakes allows politicians to dismiss genuine, incriminating evidence as "AI-generated." Conversely, a rapidly fabricated scandal, such as fake audio of a candidate making inflammatory remarks on the eve of an election, can shift public opinion before fact-checkers have the opportunity to intervene. The speed of social media amplification means that by the time a fake is debunked, its emotional and psychological impact on the electorate may already have taken hold.

The Psychological Toll on Youth

The impact on younger generations is equally concerning. Adolescents today are immersed in an environment where AI-powered filters and generative tools are used to "enhance" reality. Constant exposure to unattainable standards of beauty and lifestyle can distort self-image. When the brain cannot distinguish a peer’s real face from an AI-augmented version, the result can be a near-constant state of social comparison against an impossible, non-existent standard. This has been linked to rising rates of body dysmorphia and digital anxiety among Gen Z and Gen Alpha.

Official Responses and Proposed Solutions

Recognizing the severity of the threat, technologists and policymakers are calling for a multi-layered approach to regulation and product design. Janduí Jorge, a leader in AI innovation and digital products, and other industry experts argue that the solution must be structural rather than purely educational.

1. Permanent Content Labeling and Watermarking

Experts advocate for mandatory labeling of all AI-generated content, combining invisible watermarks with cryptographically signed provenance metadata (such as the Content Credentials defined by the C2PA standard) that identifies the origin of a file and records how it was created or edited. While this does not stop the spread of fakes, it provides a "paper trail" that platforms and savvy users can use to verify the source of the media.
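The "paper trail" idea rests on binding a claim about a file's origin to the file's exact bytes, so that any later edit breaks the binding. The sketch below illustrates only that principle; it is not the C2PA manifest format or any C2PA library's API. It uses a SHA-256 content hash plus an HMAC with a shared secret as a simplified stand-in for the certificate-based signatures that real provenance systems use, and the key and field names (`SIGNING_KEY`, `generator`, `ai_generated`) are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; real provenance systems use public-key certificates.
SIGNING_KEY = b"demo-key-not-for-production"


def _sign(claim: dict) -> str:
    """HMAC-SHA256 over a canonical (sorted-key) JSON encoding of the claim."""
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(content: bytes, generator: str, ai_generated: bool) -> dict:
    """Create a provenance record bound to the exact bytes of `content`."""
    claim = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "generator": generator,        # hypothetical field: tool that produced the file
        "ai_generated": ai_generated,  # hypothetical field: the disclosure label
    }
    return {"claim": claim, "signature": _sign(claim)}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """Check that the record is untampered and still matches the content."""
    if not hmac.compare_digest(_sign(manifest["claim"]), manifest["signature"]):
        return False  # the claim itself was altered after signing
    return manifest["claim"]["content_sha256"] == hashlib.sha256(content).hexdigest()


if __name__ == "__main__":
    image = b"example image bytes"
    record = make_manifest(image, generator="example-image-model", ai_generated=True)
    print(verify_manifest(image, record))            # True
    print(verify_manifest(image + b"edit", record))  # False: content changed
```

A record like this proves where a labeled file came from, but it can simply be stripped from a copy of the file, so its absence does not prove that an unlabeled file is authentic.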

2. Algorithmic Auditing and Accountability

There is a growing demand for independent audits of the algorithms used by major social media platforms. These audits would ensure that recommendation engines do not prioritize high-engagement synthetic misinformation over verified, factual reporting.

3. The Introduction of "Digital Friction"

One of the most innovative proposals involves changing the user interface of social media. By introducing intentional "friction"—small pauses or prompts that ask a user to reflect before sharing content—platforms can disrupt the "fast-thinking" neurological response that leads to the viral spread of deepfakes. This encourages the prefrontal cortex to engage in more critical evaluation before an image is disseminated.
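A friction step can be as simple as a gate in the share flow. The sketch below is hypothetical: the `Post` fields, the `ask_user` callback, and the three-second pause are illustrative assumptions, not any platform's actual design. It interrupts a share only when a post carries an AI-generated label or lacks verifiable provenance, and otherwise lets the share go through immediately.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Post:
    post_id: str
    labeled_ai_generated: bool      # hypothetical: set from a provenance label
    has_verified_provenance: bool   # hypothetical: origin could be verified


PAUSE_SECONDS = 3.0  # hypothetical length of the deliberate pause


def needs_friction(post: Post) -> bool:
    """Only interrupt shares of content that is synthetic or of unknown origin."""
    return post.labeled_ai_generated or not post.has_verified_provenance


def share(post: Post, ask_user: Callable[[str], bool], pause: float = PAUSE_SECONDS) -> bool:
    """Return True if the post is shared, False if the user backs out."""
    if needs_friction(post):
        time.sleep(pause)  # short, deliberate delay before the prompt appears
        prompt = ("This media may be AI-generated or its source could not be "
                  "verified. Share anyway?")
        if not ask_user(prompt):
            return False
    # A real platform would hand off to its normal sharing pipeline here.
    return True


def always_decline(prompt: str) -> bool:
    """Stand-in for a user who reconsiders at the prompt."""
    return False


if __name__ == "__main__":
    unverified = Post("p1", labeled_ai_generated=False, has_verified_provenance=False)
    verified = Post("p2", labeled_ai_generated=False, has_verified_provenance=True)
    print(share(unverified, always_decline, pause=0))  # False: user declined at the prompt
    print(share(verified, always_decline, pause=0))    # True: no prompt shown
```

Keeping the gate narrow is a deliberate choice: prompting on every share is likely to train users to click through without reading, which would undercut the slower, more critical evaluation the friction is meant to encourage.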

4. Legal and Regulatory Frameworks

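Governments are beginning to act. The European Union’s AI Act requires that deepfakes and other AI-generated or manipulated content be disclosed as such, and that providers mark synthetic outputs in a machine-readable format. In the United States, a 2023 executive order on AI directed agencies to develop guidance on content authentication and watermarking, though that order was rescinded in 2025. These measures aim to hold companies accountable when their tools are used to create harmful, unlabeled synthetic content at scale.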

Conclusion: Reclaiming Shared Memory

The challenge posed by AI is not merely a technical glitch in our information ecosystem; it is a fundamental challenge to the human experience. At its core, a functioning democracy relies on a "shared memory"—a common understanding of what has happened and what is true. When that shared memory is fractured by synthetic realities, the social contract begins to dissolve.

To navigate this new era, society must move beyond the hope that humans can simply "learn" to see through the illusion. We must instead build a digital infrastructure that honors our biological limitations. Protecting the "reality signal" of the human brain will require a combination of legislative courage, ethical engineering, and a global commitment to the preservation of truth in an age of infinite artifice.