The AI Boom Just Hit Its Next Bottleneck

The Technological Shift: From Electricity to Light

The fundamental challenge facing modern data centers is the physical limitation of copper-based electrical signals. As AI clusters grow to include tens of thousands of GPUs, the energy required to move data between these processors via copper cables generates immense heat. This thermal output necessitates sophisticated cooling systems, which in turn consume more power, creating a cycle of inefficiency that threatens to stall the scaling of large language models (LLMs).

POET Technologies has addressed this through its proprietary Optical Interposer platform. Unlike traditional methods that rely on discrete components and manual assembly, POET’s platform integrates electronic and photonic devices into a single multi-chip module. By moving data using light (photons) rather than electricity (electrons), these optical engines are said to consume up to 90% less power while delivering significantly higher bandwidth density.
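To give the "up to 90%" figure some scale, the following is a minimal sketch of the underlying arithmetic: link power is energy per bit multiplied by bits per second. The baseline of 10 pJ/bit and the 1,600 Gb/s link rate are hypothetical placeholders chosen for illustration, not figures published by POET or cited in this article, and the 90% reduction is treated as a best case.

```python
def link_power_watts(energy_pj_per_bit: float, bandwidth_gbps: float) -> float:
    """Power drawn by a link: energy per bit times bits per second."""
    joules_per_bit = energy_pj_per_bit * 1e-12
    bits_per_second = bandwidth_gbps * 1e9
    return joules_per_bit * bits_per_second


def reduced_energy_pj_per_bit(baseline_pj_per_bit: float, reduction: float = 0.90) -> float:
    """Apply a fractional power reduction (0.90 = the article's best case)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return baseline_pj_per_bit * (1.0 - reduction)


# Hypothetical inputs, assumed for illustration only.
BASELINE_PJ_PER_BIT = 10.0   # assumed energy cost of an electrical link
LINK_GBPS = 1600.0           # assumed link rate in gigabits per second

electrical_w = link_power_watts(BASELINE_PJ_PER_BIT, LINK_GBPS)
optical_w = link_power_watts(
    reduced_energy_pj_per_bit(BASELINE_PJ_PER_BIT), LINK_GBPS
)

print(f"electrical link: {electrical_w:.1f} W")
print(f"optical link (best case): {optical_w:.1f} W")
```

Because the saving applies per link, it compounds across the many thousands of links in a large cluster, which is why a per-bit efficiency claim matters at data center scale.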

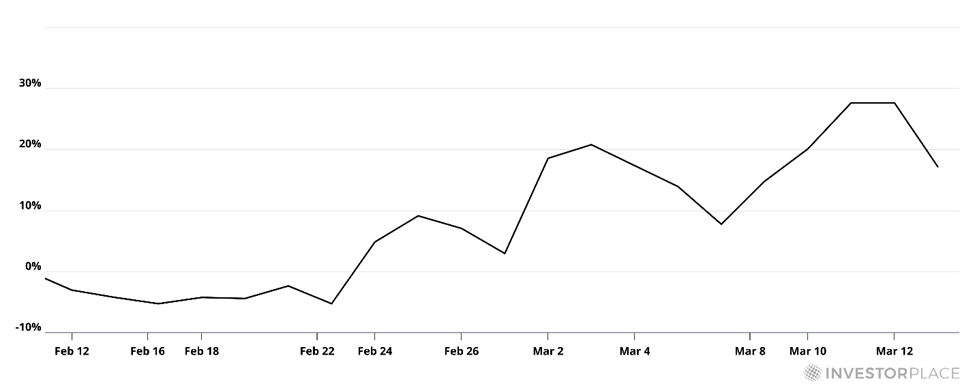

Industry validation for this approach arrived following Marvell Technology’s acquisition of Celestial AI. Because Celestial’s technology was built upon POET’s platform, the deal signaled to the broader market that optical interconnects are no longer a theoretical solution but a commercial necessity. Analysts suggest that as AI clusters move toward "scale-out" architectures—where data must travel longer distances between server racks—optical networking will become the dominant standard.

A Chronology of the AI Infrastructure Gold Rush

The current investment climate in AI can be characterized as a "rolling gold rush," a term coined by hypergrowth expert Luke Lango to describe the sequence of infrastructure bottlenecks that have emerged since late 2022. Each phase of this sequence has produced distinct market leaders as capital flows toward the companies solving the most immediate constraints.

- The Compute Phase (2022–2023): The initial bottleneck was raw processing power. This phase saw Nvidia (NVDA) rise to become one of the world’s most valuable companies as demand for its H100 GPUs, and later the Blackwell-generation B200, skyrocketed.

- The Server Build-out Phase (2023–2024): As GPUs became available, the bottleneck shifted to the assembly of these chips into massive clusters. This propelled companies like Super Micro Computer (SMCI) and Dell Technologies, which specialize in high-density server architecture.

- The Power and Cooling Phase (2024–Present): The massive energy requirements of AI clusters led to a surge in demand for liquid cooling solutions and reliable electricity. This phase highlighted the importance of utility providers and thermal management specialists.

- The Networking and Interconnect Phase (Emerging): The current bottleneck is the "network plumbing" that links GPUs. This is where optical engines and advanced cabling systems are becoming the primary focus for capital expenditure by hyperscalers like Microsoft, Amazon, and Google.

The Persistent Role of Copper in a Fiber-Optic World

While optical technology represents the future of long-distance data transmission, copper remains an indispensable component of the AI build-out. The debate between copper and fiber is not a zero-sum game; rather, it is a matter of application and distance. For "scale-up" operations—connections within a single server rack where distances are measured in centimeters—copper is still preferred due to its lower cost and high efficiency over very short ranges.

Macroeconomic analyst Eric Fry has consistently argued that demand for copper will outstrip supply for the foreseeable future. Fry forecasts that copper prices could reach $8.00 per pound by 2026, driven by a convergence of factors including AI data center construction, the global transition to renewable energy, and the modernization and expansion of aging electrical grids.

Data from S&P Global supports this bullish outlook, projecting that global copper demand will increase by nearly 50% by 2040. However, the pipeline for new copper mines is historically thin. The International Copper Study Group (ICSG) expects the refined copper market to flip into a deficit of approximately 150,000 tonnes in the coming year. More aggressive estimates from firms like UBS suggest the deficit could reach as high as 400,000 tonnes as mine production growth fails to keep pace with industrial modernization.

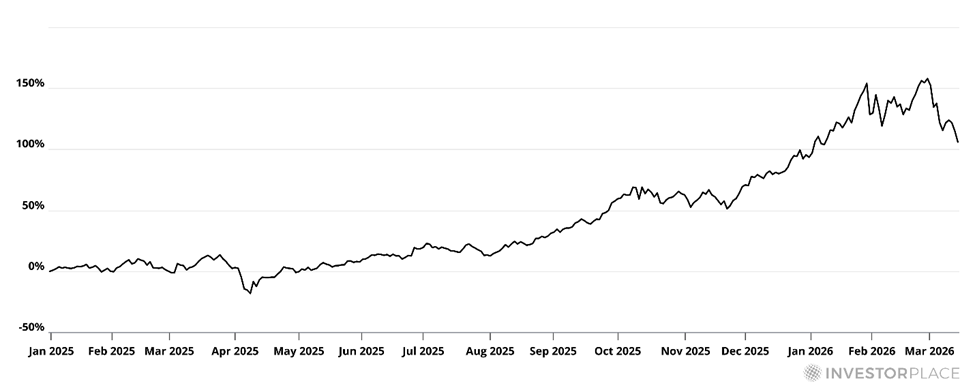

The scale of the challenge is historic. To maintain the current pace of global electrification and AI expansion, the mining industry would need to produce as much copper over the next two decades as has been mined in all of human history. This supply-demand imbalance has already led to significant gains in the Global X Copper Miners ETF (COPX), which has more than doubled in value since the start of 2025.

Supporting Data: The Next Chokepoints

As the market absorbs the networking and copper narratives, new bottlenecks are already forming in the areas of energy and memory. Market research indicates that nearly 100 gigawatts of new data center capacity are scheduled to come online globally over the next four years. However, the supply chain for High Bandwidth Memory (HBM) and conventional Dynamic Random Access Memory (DRAM) can currently support only about 15 gigawatts of that capacity over the next two years.
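The size of that gap can be sketched with simple arithmetic. The example below assumes the planned 100 gigawatts arrives at an even pace across the four years, which is a simplification introduced here and not something the market research states, and then compares the two-year slice against the roughly 15 gigawatts that memory supply can support.

```python
def capacity_in_window(total_gw: float, total_years: float, window_years: float) -> float:
    """Capacity expected inside the window, assuming an even annual build-out."""
    if total_years <= 0:
        raise ValueError("total_years must be positive")
    return total_gw * window_years / total_years


# Figures from the article, rounded ("nearly 100", "roughly 15").
PLANNED_GW = 100.0
PLANNED_YEARS = 4
MEMORY_SUPPORTED_GW = 15.0
MEMORY_WINDOW_YEARS = 2

# Assumption: build-out is spread evenly, so half arrives in the first two years.
planned_in_window_gw = capacity_in_window(PLANNED_GW, PLANNED_YEARS, MEMORY_WINDOW_YEARS)
coverage = MEMORY_SUPPORTED_GW / planned_in_window_gw
shortfall_gw = planned_in_window_gw - MEMORY_SUPPORTED_GW

print(f"planned capacity in window: {planned_in_window_gw:.0f} GW")
print(f"memory coverage: {coverage:.0%}")
print(f"unsupported capacity: {shortfall_gw:.0f} GW")
```

Under the even-pacing assumption, memory supply covers less than a third of the capacity due in the same two-year window. A back-loaded build-out would narrow the near-term gap and a front-loaded one would widen it.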

Nvidia CEO Jensen Huang has publicly identified the memory bottleneck as a "severe" constraint on AI progress. Without sufficient DRAM, processors cannot hold and retrieve the model weights and working data required for real-time AI inference. This has led to increased interest in companies like Micron Technology and SK Hynix, which are racing to expand their fabrication facilities.

Furthermore, the physical backbone of the internet—fiber-optic cabling—is seeing a resurgence. Corning (GLW), a global leader in glass and optical fiber, has seen its stock price surge over 240% following its pivot toward AI-specific networking solutions. The company’s ability to provide the "scale-out" infrastructure needed to connect disparate data center buildings has made it a cornerstone of the AI networking trade.

Upcoming Catalysts and Market Reactions

The semiconductor and networking industries are looking toward several key events in 2026 to gauge the pace of adoption for these new technologies. The OFC 2026 conference (Optical Fiber Communication Conference and Exhibition) is expected to be a major catalyst for POET Technologies and its peers. As the flagship event for the optical networking industry, it serves as the primary venue for companies to demonstrate hardware interoperability and announce new partnerships with hyperscalers.

POET has recently fortified its balance sheet, reporting over $400 million in cash reserves, which provides the runway needed to execute its production roadmap. Analysts believe that if adoption of optical interposers materializes as expected among tier-one cloud providers, the stock could deliver a "multi-bagger" return as the company transitions from a specialized component designer to a high-volume manufacturer.

Broader Economic and Industrial Implications

The implications of these bottlenecks extend beyond the stock market. The struggle to secure copper, memory, and energy is forcing a geopolitical realignment of supply chains. Governments are increasingly viewing semiconductor materials and electrical infrastructure as matters of national security. This has led to the "FutureProof" movement among investors—a strategy of identifying companies that own the "chokepoints" of the modern economy.

When an industry encounters a bottleneck, the companies that provide the solution gain immense pricing power. In the first phase of the AI boom, this power belonged to the chipmakers. In the current and upcoming phases, it is shifting toward the companies that manage the physical movement of data and the underlying raw materials.

The transition from copper to optics inside data centers is a microcosm of the broader technological evolution. While copper will continue to power the world’s grids and short-range electronics, the "speed of light" is becoming the new benchmark for the AI era. Investors who recognize the sequence of these bottlenecks, from compute to networking to memory, are positioned to capitalize on the structural shifts that will define the global economy through the end of the decade. The data suggests that the AI build-out is not a single event but a multi-year industrial overhaul that requires a massive influx of both high-tech innovation and traditional raw materials.