Google Cloud Enhances Vids Platform with Controllable AI Avatars and Veo 3.1 Lite Integration for Expanded User Accessibility

Google Cloud has unveiled a suite of updates for Vids, the AI-powered video creation application in Google Workspace, aimed at narrowing the gap between professional-grade production and accessible content creation. The rollout introduces text-driven controls for digital avatars, integrates the Veo 3.1 Lite video generation model, and adds direct export to YouTube, marking a shift in how the company positions generative video tools for both enterprise and personal use. By opening these features to more users, Google is lowering the barrier to entry for high-quality video storytelling, which has historically required expensive software, specialized hardware, and extensive technical training.

Advanced Control Mechanisms for Digital Avatars

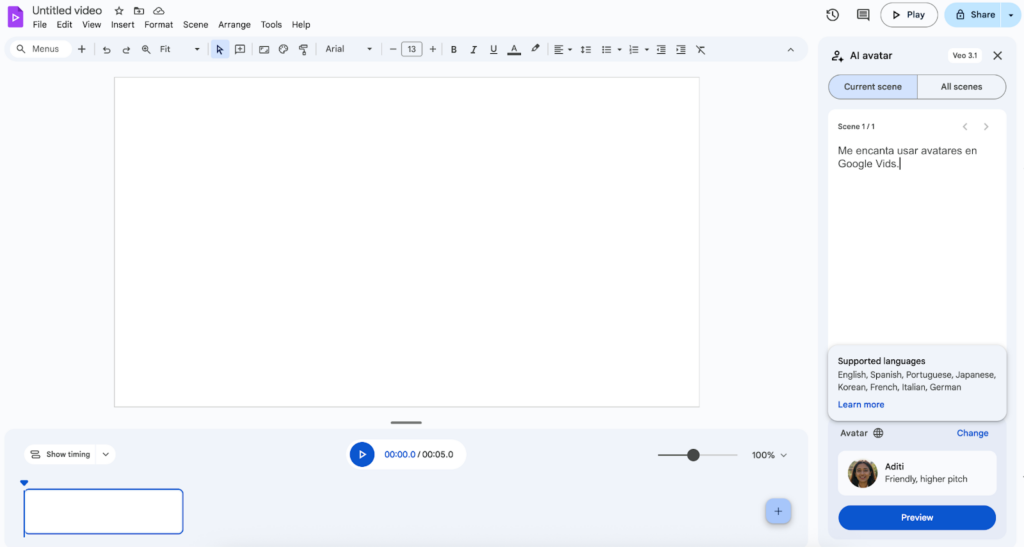

At the forefront of this update is the introduction of controllable avatars, a feature designed to address one of the most persistent challenges in AI-generated video: spatial and interactional consistency. Users can now insert digital avatars into specific scenes and direct them to interact with uploaded objects, such as a company’s new product, a piece of medical equipment, or a physical prop. These interactions are driven by simple text commands, so creators can dictate movements without manipulating animation keyframes.

The technical sophistication of these controllable avatars lies in their ability to maintain visual and auditory consistency across multiple frames. In traditional video production, achieving a consistent look and voice requires multiple takes and meticulous editing. Within Vids, the AI ensures that the avatar’s face, clothing, and vocal characteristics remain stable throughout the generated sequence. This reliability is particularly valuable for corporate training videos, product demonstrations, and educational content where professional polish and brand continuity are essential.

Complementing the control features is the new capacity for avatar personalization. Users are no longer restricted to a library of pre-set characters; they can now generate entirely custom avatars via text prompts. This includes the ability to adjust physical appearance, change attire to suit specific professional or casual contexts, and alter background environments to match the desired tone of the video. By providing these customization layers, Google is catering to a global market where representation and localized aesthetics are critical for audience engagement.

Integration of Veo 3.1 Lite and Expanded User Access

In a strategic move to broaden its user base, Google has integrated the Veo 3.1 Lite model directly into the Vids interface. Veo is Google’s most capable generative video model to date, and its "Lite" iteration is optimized for the rapid generation of dynamic clips within a web-based environment. For the first time, this technology is being made available to users with personal Google accounts, moving beyond the initial enterprise-only restriction of the Workspace Labs program.

The Veo 3.1 Lite integration allows users to transform text prompts or uploaded images into 8-second cinematic clips. To encourage adoption, Google is offering personal account holders 10 free generations per month, providing a sandbox for individual creators to experiment with high-fidelity video synthesis. This rollout reflects a broader trend in the industry where high-end AI research is being distilled into consumer-ready tools, mirroring the trajectory of large language models like Gemini.
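As a rough illustration of what the free allowance amounts to, the arithmetic can be sketched in a few lines of Python. The two figures (10 generations per month, 8 seconds per clip) are those reported above. The function and constant names are hypothetical and not part of any Google API, and the sketch assumes every generation yields a full 8-second clip.

```python
# Hypothetical helper: illustrative arithmetic only, not a Google API.
FREE_GENERATIONS_PER_MONTH = 10  # reported free allowance for personal accounts
CLIP_LENGTH_SECONDS = 8          # reported clip length for Veo 3.1 Lite in Vids


def max_free_footage_seconds(generations: int = FREE_GENERATIONS_PER_MONTH,
                             clip_seconds: int = CLIP_LENGTH_SECONDS) -> int:
    """Upper bound on generated footage per month, assuming full-length clips."""
    if generations < 0 or clip_seconds < 0:
        raise ValueError("generations and clip_seconds must be non-negative")
    return generations * clip_seconds


if __name__ == "__main__":
    total = max_free_footage_seconds()
    print(f"Up to {total} seconds of generated footage per month")
```

Under these assumptions the allowance tops out at 80 seconds of footage a month, which is enough for experimentation but not for sustained production.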

The inclusion of image-to-video capabilities is particularly significant. This allows users to take a static brand logo, a photograph of a physical location, or a conceptual sketch and animate it with fluid motion. This feature effectively bridges the gap between static presentations and dynamic media, allowing users to create high-impact visual assets with minimal effort.

Streamlining the Distribution Workflow: Direct YouTube Export

Efficiency in a digital workflow is often measured by how much "friction" it removes: the small, repetitive tasks that consume time during the creative process. To that end, Google has implemented a direct export feature that lets Vids projects be published to YouTube without manual downloading and re-uploading.

This integration serves several purposes. For corporate users, it simplifies hosting internal training videos on private YouTube channels or sharing public-facing marketing content. For individual creators, it shortens the path from ideation to publication. By keeping the entire lifecycle of a video, from AI generation and editing to hosting and distribution, within the Google ecosystem, the company is strengthening the "sticky" nature of its Workspace suite and positioning it as an all-in-one creative hub that competes directly with specialized video editing platforms and rival AI video tools.

A Chronology of Innovation: Building on Recent Milestones

The updates announced this Thursday do not exist in a vacuum but represent the latest stage in a rapid development timeline for the Vids platform. Since its initial announcement at Google Cloud Next ’24, the application has undergone several iterative improvements designed to enhance its versatility.

In February 2025, Google expanded the platform’s linguistic capabilities by adding support for seven new languages: French, German, Italian, Korean, Portuguese, Spanish, and Japanese. This expansion was not merely about text-to-speech translation; it included the synchronization of avatar lip movements and cultural nuances in vocal delivery, making Vids a truly global tool for multinational corporations.

Shortly thereafter, the platform introduced "Cartoon Avatars," offering both 2D and 3D stylized characters. These were designed to provide a more universal and emotionally resonant alternative to realistic avatars, which can sometimes fall into the "uncanny valley." Cartoon avatars have proven particularly popular in educational settings and internal communications where a friendly, approachable tone is preferred over a strictly formal one.

Furthermore, the integration of the Lyria 3 model brought advanced audio capabilities to Vids. Lyria 3 allows for the generation of high-quality background music and soundscapes from text descriptions. Users can generate short 30-second clips for social media snippets or full-length tracks for long-form presentations. This ensures that the auditory quality of the content matches the visual sophistication provided by Veo 3.1 Lite.

Technical Analysis and Market Implications

The evolution of Google Vids signals a shift in the competitive landscape of generative AI. While competitors such as OpenAI (with Sora), Runway, and Pika Labs have focused on the raw generation of video clips, Google is focusing on the "packaging" of these capabilities into a collaborative, productivity-focused application.

By embedding Vids within Google Workspace, the company is leveraging its existing infrastructure of Docs, Sheets, and Slides. A Vids project can ingest data from a Google Slide deck or a brief written in a Google Doc to automatically generate a first draft of a video. This "AI-first" approach to productivity suggests that in the near future, creating a video will be as standard a business task as drafting an email or building a spreadsheet.

From a technical perspective, the move to "controllable" avatars suggests that Google has made significant strides in AI-driven character animation. For an AI character to interact convincingly with a user-uploaded object, the model has to represent spatial relationships and plausible physics within the generated scene. This is a non-trivial achievement, and it strengthens Google's position in practical, applied generative video.

Official Responses and Industry Impact

Public statements accompanying Thursday's announcement focused on the technical deployment, but industry analysts have noted that Google’s strategy is clearly aimed at the "prosumer" and enterprise markets. Unlike pure entertainment-focused AI video tools, Vids is being marketed as a solution for real-world business problems: reducing the cost of training, improving internal communication engagement, and accelerating marketing cycles.

Frequently cited industry estimates attribute more than 80% of all internet traffic to video, and businesses are increasingly looking for ways to produce this content at scale. The traditional barriers, namely high production costs and the need for specialized personnel, have created a bottleneck. Tools like Vids are designed to break it.

The broader implications for the workforce are also being scrutinized. As AI becomes capable of producing professional-level video from simple text prompts, the role of the video editor and animator is likely to shift from manual execution to high-level creative direction. Google’s emphasis on "collaboration in real-time" within Vids suggests a future where teams work together to "prompt" a video into existence, rather than passing files back and forth between disparate editing suites.

Future Outlook for the Vids Ecosystem

As Google Cloud continues to refine its AI models, the capabilities of Vids are expected to expand further. Potential future updates may include more granular control over lighting and cinematography, deeper integration with 3D modeling tools, and even more advanced real-time collaboration features.

The current update represents a foundational step in making generative video a daily tool for millions of users. By providing 10 free generations per month to personal accounts and introducing the Veo 3.1 Lite model, Google is seeding the market for a new generation of creators who treat AI as a routine medium for storytelling. Whether for a small business owner creating a first ad or a corporate executive preparing a global town hall, the enhancements to Google Vids point to a future in which the main constraint on video production is the user’s imagination rather than budget or technical skill.