The Evolution of AI Infrastructure Bottlenecks and the Shift Toward Physical Resource Constraints

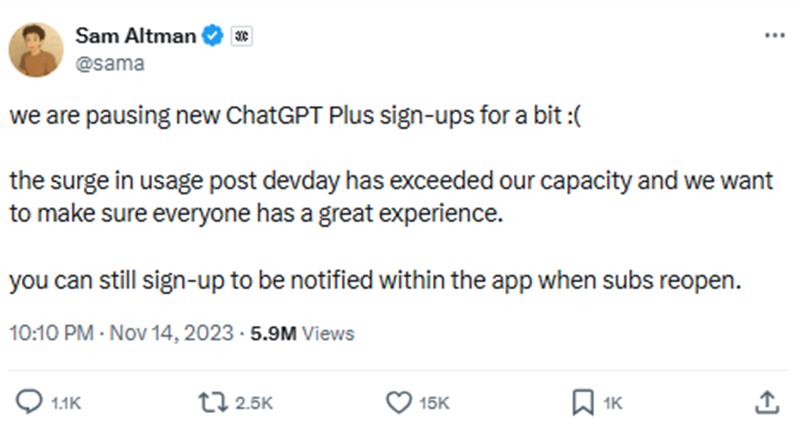

The rapid expansion of generative artificial intelligence has fundamentally altered the global economic landscape, but the trajectory of this technological boom is increasingly dictated by the physical limitations of hardware and infrastructure. In November 2023, roughly one year after the public debut of ChatGPT, OpenAI Chief Executive Officer Sam Altman took the unusual step of suspending new paid subscriptions for ChatGPT Plus. This decision was not a result of waning interest but rather a direct consequence of overwhelming demand that exceeded the company’s available computing capacity. This event marked the emergence of the first significant bottleneck in the AI era: the shortage of high-performance graphics processing units (GPUs) and of the processing capacity they provide, commonly referred to as "compute."

As the industry moves into 2025 and 2026, market analysts and technology experts are identifying a critical shift. While the initial constraints were defined by chip supply and data center networking, the next phase of AI development is running into the limitations of the physical world, specifically electrical grid capacity, specialized metals, and advanced memory. Understanding the chronology of these bottlenecks provides a roadmap for the future of the AI economy.

The First Bottleneck: The Global Compute Shortage

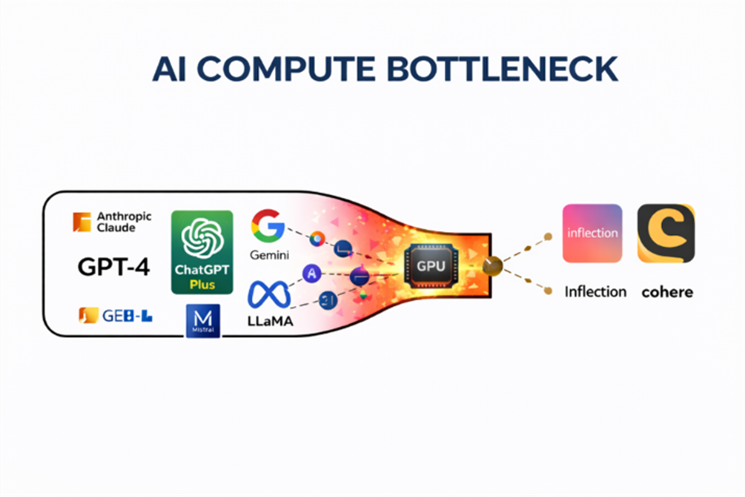

The launch of ChatGPT in late 2022 triggered an arms race among technology firms, including established "hyperscalers" like Microsoft, Alphabet, and Meta, as well as a wave of well-funded startups. To train large language models (LLMs) and run inference at scale, these organizations required thousands of specialized chips capable of handling massive parallel processing tasks.

By late 2023, the industry hit a supply-side wall. The demand for Nvidia’s H100 Tensor Core GPUs far outpaced the manufacturing capabilities of the global semiconductor supply chain. During this period, the H100 became one of the most sought-after commodities in the world. Originally priced at approximately $25,000 to $30,000, these units were reportedly being resold on secondary markets for upwards of $40,000 as companies scrambled to secure the hardware necessary to remain competitive.
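The size of that resale premium follows from simple arithmetic on the figures quoted above. The sketch below is illustrative only: `premium_pct` is a hypothetical helper name, and the $40,000 resale figure is treated as a floor because the reports describe prices "upwards of" that level.

```python
def premium_pct(list_price: float, resale_price: float) -> float:
    """Return the percentage premium of a resale price over a list price."""
    if list_price <= 0:
        raise ValueError("list_price must be positive")
    return (resale_price / list_price - 1.0) * 100.0


# Figures quoted in the article: the list price range and the reported resale floor.
low_list, high_list, resale = 25_000, 30_000, 40_000

premium_vs_low = premium_pct(low_list, resale)    # premium over the cheapest list price
premium_vs_high = premium_pct(high_list, resale)  # premium over the priciest list price

print(f"Resale premium: at least {premium_vs_high:.1f}% to {premium_vs_low:.1f}%")
```

On these numbers, buyers on the secondary market were paying at least a third more than list price, and as much as 60% more against the low end of the range.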

Nvidia Corporation (NVDA) became the primary beneficiary of this compute bottleneck. For much of its history, Nvidia was viewed as a cyclical chipmaker focused on the video game industry. However, the pivot to AI transformed the company’s data center division into its primary revenue driver. From the launch of ChatGPT through early 2025, Nvidia’s stock price surged by nearly 1,000%, reflecting its near-monopoly on the hardware required for AI training. This growth was driven by a fundamental supply-demand imbalance: the AI models were ready for deployment, but the physical hardware to run them at scale did not exist in sufficient quantities.

The Networking and Interconnect Crisis

As companies successfully acquired GPUs, a secondary bottleneck emerged: networking. Training an advanced AI model is not a task for a single chip; it requires clusters of tens of thousands of GPUs working in perfect synchronization. If data cannot move between these chips at extraordinary speeds, the GPUs remain idle, leading to massive operational inefficiencies and wasted capital expenditure.
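A rough way to see why slow interconnects waste capital is to treat each training step as computation time plus the time GPUs spend waiting on data transfers. The sketch below is a deliberately simplified model, not a description of any real cluster: the function name, the step timings, and the 10,000-GPU cluster size are all hypothetical values chosen for illustration.

```python
def gpu_utilization(compute_s: float, exposed_comm_s: float) -> float:
    """Fraction of each training step that GPUs spend computing.

    compute_s: seconds of useful computation per step.
    exposed_comm_s: seconds per step spent waiting on data transfers
        that are not overlapped with computation.
    """
    if compute_s < 0 or exposed_comm_s < 0:
        raise ValueError("times must be non-negative")
    total = compute_s + exposed_comm_s
    if total == 0:
        raise ValueError("step time must be positive")
    return compute_s / total


# Hypothetical values for illustration only, not measurements.
CLUSTER_GPUS = 10_000
slow_network = gpu_utilization(compute_s=0.8, exposed_comm_s=0.2)
fast_network = gpu_utilization(compute_s=0.8, exposed_comm_s=0.1)

for label, util in [("slow", slow_network), ("fast", fast_network)]:
    # Express the idle share of the cluster as an equivalent number of GPUs.
    idle_equivalent = round(CLUSTER_GPUS * (1.0 - util))
    print(f"{label} network: {util:.1%} utilized, "
          f"about {idle_equivalent:,} GPUs' worth of idle capacity")
```

Under this model, halving the exposed communication time recovers capacity equivalent to hundreds of GPUs without buying a single additional chip, which is the economic case for faster networking hardware.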

This interconnectivity crisis brought Broadcom Inc. (AVGO) to the forefront of the AI infrastructure trade. Broadcom specializes in the networking semiconductors—such as the Tomahawk and Jericho switch chips—that facilitate high-speed data transfer within massive data centers. Furthermore, Broadcom collaborated with major tech firms to develop custom AI accelerator chips, or Application-Specific Integrated Circuits (ASICs), tailored for specific workloads.

The market response to the networking bottleneck mirrored the surge seen in the compute sector. Since late 2022, Broadcom shares have seen gains of approximately 600%. Investors began to realize that the value of the AI boom was not just in the "brain" of the system (the GPU) but in the "nervous system" (the networking infrastructure) that allowed the components to communicate.
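Percentage gains of this size are easy to misread: a gain of 1,000% means the price ended at 11 times its starting level, not 10. The short sketch below converts the approximate gains quoted in this article into price multiples. The helper name and the $10,000 starting stake are illustrative assumptions, and the inputs are the article's rounded figures rather than exact market data.

```python
def gain_to_multiple(gain_pct: float) -> float:
    """Convert a percentage gain into a multiple of the starting price."""
    return 1.0 + gain_pct / 100.0


# Approximate gains quoted in the article ("nearly 1,000%" and "approximately 600%").
quoted_gains = {"NVDA": 1000.0, "AVGO": 600.0}
STAKE = 10_000  # hypothetical starting investment in dollars

for ticker, gain in quoted_gains.items():
    multiple = gain_to_multiple(gain)
    print(f"{ticker}: +{gain:.0f}% is {multiple:.0f}x, "
          f"so ${STAKE:,} becomes ${STAKE * multiple:,.0f}")
```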

Chronology of Market Adjustments and the Cooling of Chip Stocks

While the initial infrastructure providers saw historic gains, recent market data suggests that the "compute-only" phase of the AI trade is reaching a point of diminishing returns. By late 2024 and early 2025, the extreme shortages of GPUs began to ease as manufacturing capacity caught up and major tech firms completed their initial massive hardware build-outs.

The performance of Advanced Micro Devices Inc. (AMD) serves as a case study for this transition. AMD was positioned as a key alternative to Nvidia, and investors who recognized the compute shortage early saw significant returns. However, after booking substantial gains in mid-2024, the stock began to face headwinds. By the end of October 2024, AMD shares saw a pullback of more than 20%, while Nvidia and Broadcom also experienced corrections of 15% and 10%, respectively.

This cooling period indicates that Wall Street is no longer pricing in infinite growth for chipmakers based solely on the initial hardware shortage. Instead, the focus is shifting toward the operational limits that prevent these chips from being utilized to their full potential.

Emerging Bottlenecks: Electricity and the Power Grid

The most significant emerging constraint for the next phase of AI is the availability of reliable, high-capacity electricity. A single query on a generative AI platform consumes significantly more electricity than a standard Google search. As data centers grow in size and complexity, their energy requirements are beginning to strain national power grids.

According to data from the International Energy Agency (IEA), global electricity consumption by data centers, artificial intelligence, and the cryptocurrency sector could double from 2022 levels by 2026. In the United States, utility companies are already revising their demand forecasts upward for the first time in decades. This has led to a renewed interest in nuclear energy, small modular reactors (SMRs), and advanced cooling technologies.
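To put the doubling in perspective, it can be converted into an implied annual growth rate. The sketch below assumes a 2022 baseline, so the doubling is spread over four years, and it assumes smooth compound growth. Both are simplifying assumptions, and `implied_annual_growth` is a hypothetical helper name.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Compound annual growth rate needed to reach `multiple` in `years`."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


BASELINE_YEAR = 2022  # assumed starting point for the doubling
TARGET_YEAR = 2026    # end year of the projection

rate = implied_annual_growth(2.0, TARGET_YEAR - BASELINE_YEAR)
print(f"Implied growth: {rate:.1%} per year")  # Implied growth: 18.9% per year
```

Sustained growth of nearly 19% a year is far above what grid planners have historically budgeted for, which helps explain why utilities are revising their forecasts.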

Without a massive expansion of electrical infrastructure, the AI boom could stall. Data center operators are increasingly finding that while they can buy the chips and the servers, they cannot secure the grid "interconnection agreements" with local utilities needed to power them. This "power bottleneck" is expected to be a primary theme in corporate earnings reports throughout 2025 and 2026.

The Critical Role of Metals and Specialized Materials

Beyond energy, the physical construction of AI infrastructure requires an immense volume of specialized metals. Copper, in particular, is essential for the high-density cabling and power distribution systems within data centers. Analysts at Goldman Sachs have estimated that AI-driven data center demand could result in an additional one million tons of copper demand by 2030.

The mining industry, however, faces long lead times for new projects, often taking a decade or more to bring a new mine online. This creates a structural deficit that could lead to price spikes in industrial metals. Furthermore, advanced memory chips (HBM or High Bandwidth Memory) require specific rare earth elements and specialized manufacturing processes that are currently in short supply. Companies that control the supply chain for these physical materials are likely to become the next "bottleneck winners" as the industry shifts its focus from digital architecture to physical construction.

Analysis of Broader Implications and Future Outlook

The transition from software-driven growth to resource-constrained growth marks a maturing of the AI sector. The "FutureProof 2026" outlook suggests that the market is entering a phase where the primary victors will not be the companies with the best algorithms, but those with the most secure access to the physical building blocks of the digital age.

Key dates for market observers include April 24, 2026, when several of the world’s largest AI hyperscalers are scheduled to report quarterly earnings. These reports are expected to provide clarity on how supply limits in electricity, metals, and memory are impacting capital expenditure and deployment timelines. If these companies acknowledge that their growth is being capped by physical shortages, it could trigger a significant rotation in the stock market, moving capital away from traditional "Big Tech" and toward utilities, mining firms, and infrastructure providers.

In summary, the AI revolution is no longer a purely virtual phenomenon. It is a massive industrial project that requires land, power, and raw materials. The initial surge in Nvidia and Broadcom shares demonstrated the value of identifying digital bottlenecks. The next phase of the boom will likely reward those who identify the constraints of the physical world. As the industry approaches 2026, the focus will remain on whether the global infrastructure can evolve quickly enough to support the insatiable appetite of artificial intelligence.