The Future of AI Infrastructure: OpenClaw, the CPU Renaissance, and the Emerging Bottlenecks of the Agentic Era

The global technology landscape is currently undergoing a fundamental transition from generative artificial intelligence, characterized by conversational chatbots, to agentic artificial intelligence, driven by autonomous software entities capable of independent execution. At the center of this shift is OpenClaw, an open-source platform designed for the development and deployment of autonomous AI "agents." Unlike traditional large language models (LLMs) that require constant human prompting to generate text or images, OpenClaw-enabled agents are designed to perform complex, multi-step tasks with minimal oversight. This evolution from "AI that talks" to "AI that does" is precipitating a massive restructuring of global computing infrastructure, moving the focus from raw processing power to sophisticated system coordination.

The Rise of the Agentic AI Paradigm

The emergence of OpenClaw signals what industry veterans, including Brian Hunt of Money & Megatrends, describe as the "Agent Supernova." This phase of the AI revolution involves the introduction of billions of "AI workers" into the global economy. These agents are programmed to manage specific roles—ranging from scheduling and email correspondence to financial transactions and cross-platform workflow coordination—without the need for continuous human intervention.

This shift is not merely an incremental software update; it represents a "before and after" moment for digital economies. While the initial AI boom focused on the "inference" and "training" of models, the agentic era focuses on "execution." When an AI agent is tasked with organizing a corporate retreat, it must interact with travel APIs, coordinate with participants’ calendars, negotiate with vendors, and manage budgets. Such tasks require a level of logic and sequential processing that differs significantly from the parallel processing tasks traditionally handled by graphics processing units (GPUs).

The Resurgence of the Central Processing Unit

For the past three years, the semiconductor narrative has been dominated by the GPU. Companies like Nvidia saw unprecedented growth as data centers scrambled to acquire the specialized chips necessary to train massive neural networks. However, as agentic systems like OpenClaw become the standard, the demands on data center architecture are pivoting.

In an agentic ecosystem, multiple AI agents must collaborate, sharing data in real time and making sequential decisions. This requires a "conductor" to manage the traffic between various sub-systems. While GPUs remain the "engines" that run the underlying AI models, central processing units (CPUs) are increasingly serving as the conductors. The CPU is optimized for serial processing and complex branching logic, which are exactly the requirements for managing the workflows of autonomous agents.
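The division of labor between "conductor" and "engine" can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not OpenClaw's actual API: `run_model`, `plan_retreat`, `TaskState`, and the vendor quotes are hypothetical names and values invented for the example. The stubbed model call stands in for GPU-bound inference, while the surrounding loop and branching is the serial coordination work that would fall to a CPU.

```python
from dataclasses import dataclass, field


def run_model(prompt: str) -> str:
    """Hypothetical stand-in for a GPU-backed model call (the "engine")."""
    return f"draft for: {prompt}"


@dataclass
class TaskState:
    """Running state the coordinator carries from one step to the next."""
    budget: float
    spent: float = 0.0
    log: list = field(default_factory=list)


def plan_retreat(state: TaskState, quotes: dict) -> TaskState:
    """Serial, branch-heavy coordination (the "conductor").

    Vendors are visited in the order given, which is treated as priority order.
    """
    for vendor, price in quotes.items():
        if state.spent + price > state.budget:
            # Branch: this step would break the budget, so skip it.
            state.log.append(f"skip {vendor}: over budget")
            continue
        # Otherwise hand the step to the model and record the spend.
        state.log.append(run_model(f"book {vendor}"))
        state.spent += price
    return state


# Illustrative figures only.
quotes = {"venue": 3000.0, "catering": 1500.0, "band": 1200.0}
state = plan_retreat(TaskState(budget=5000.0), quotes)
for entry in state.log:
    print(entry)
print("spent:", state.spent)
```

Each step depends on the state left by the one before it, so the loop cannot be spread across parallel hardware the way a matrix multiplication can. That dependency is the reason the coordination layer favors CPUs.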

Market analysts now project a significant "CPU Renaissance." The global server CPU market, currently valued at approximately $27 billion, is estimated to reach $60 billion by 2030. This growth is driven by the need for higher coordination capacity within AI clusters. As Eric Fry, a macro-investing expert, notes, the semiconductor industry is already witnessing the first signs of a supply-demand imbalance in this sector. Delivery lead times for high-end server processors have reportedly stretched to six months in some regions, with spot prices rising by more than 10% as data center operators realize that GPU-heavy configurations are insufficient for the next generation of agentic software.
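The growth rate implied by those two market figures is easy to check. The sketch below assumes a horizon of four or five years, because the text gives the starting value only as "currently" and does not name a base year. The function name `implied_cagr` is introduced here for illustration.

```python
def implied_cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate needed to go from start_value to end_value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# $27 billion today to $60 billion by 2030; the horizon length is an assumption.
for years in (4, 5):
    rate = implied_cagr(27.0, 60.0, years)
    print(f"{years}-year horizon: {rate:.1%} per year")
```

Under either assumption the projection implies compound growth of roughly 17% to 22% a year.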

Chronology of the AI Infrastructure Buildout

To understand the current bottleneck, it is necessary to view the AI buildout in three distinct phases:

- Phase I: The Training Era (2022–2024): Dominated by the massive acquisition of GPUs (specifically Nvidia’s A100 and H100 series) to build and train large language models.

- Phase II: The Inference and Agentic Shift (2025–2026): The current phase, where the focus shifts to running models efficiently and deploying autonomous agents. This phase introduces a reliance on "Hybrid AI," where CPUs and GPUs work in closer tandem, and local processing becomes as important as cloud processing.

- Phase III: The Fully Autonomous Economy (2027 and Beyond): A period where AI agents handle the majority of administrative and logistical tasks globally, requiring a massive expansion of physical infrastructure, including energy grids and specialized materials.

Material Constraints: The Aluminum and Energy Bottleneck

The transition to agentic AI is not only a digital or silicon-based challenge; it is a physical one. The expansion of data centers to accommodate platforms like OpenClaw requires a massive influx of industrial materials. Aluminum has emerged as a critical, yet often overlooked, component of this infrastructure.

Aluminum is essential for the construction of server racks, advanced cooling systems, and power transmission infrastructure. Every megawatt of power delivered to an AI data hub requires approximately one to two tons of aluminum for high-voltage lines and internal components. As AI data centers consume an increasing share of global electricity, the demand for aluminum is projected to grow from 104 million tons in 2024 to 120 million tons by 2030.
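The figures above lend themselves to a quick back-of-the-envelope calculation. The sketch below uses the one-to-two-tons-per-megawatt range and the 2024 and 2030 demand figures from the text. The 500 MW campus size is a hypothetical input chosen only to show the arithmetic, and the function names are introduced here for illustration.

```python
TONS_PER_MW = (1.0, 2.0)  # low and high estimates quoted in the text


def aluminum_range_tons(capacity_mw: float) -> tuple:
    """Low and high aluminum estimates, in tons, for a given power capacity."""
    low, high = TONS_PER_MW
    return capacity_mw * low, capacity_mw * high


def annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two demand figures."""
    return (end / start) ** (1 / years) - 1


low, high = aluminum_range_tons(500.0)  # hypothetical 500 MW campus
growth = annual_growth(104.0, 120.0, 2030 - 2024)  # million tons, per the text

print(f"500 MW campus: {low:,.0f} to {high:,.0f} tons of aluminum")
print(f"Implied demand growth, 2024 to 2030: {growth:.1%} per year")
```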

This creates a dual-layered bottleneck. First, the physical production of aluminum is one of the most energy-intensive processes in heavy industry. Second, as AI data centers compete for the same energy resources as aluminum smelters, the cost of production for the metal is likely to rise alongside the demand for its use in those very data centers. This "circular demand" is a classic indicator of a structural supply-demand imbalance. Alcoa (AA), a leading global producer of aluminum, has already seen its stock price appreciate significantly—rising 54% since late 2025—as the market begins to price in these infrastructure constraints.

The Memory Scarcity and Corporate Performance

The "Agent Supernova" also places immense pressure on memory and storage. Autonomous agents generate and process vast amounts of contextual data to maintain "memory" across tasks. This has led to a surge in the earnings of memory chip manufacturers.

Micron Technology (MU), a primary player in the High Bandwidth Memory (HBM) market, recently reported a tripling of revenues. However, the more significant takeaway from its executive disclosures is the inability to meet current demand. CEO Sanjay Mehrotra stated that the company can currently supply only 50% to 66% of what its key customers require.

The semiconductor industry is facing a reality where capacity cannot be expanded fast enough to meet the needs of the agentic era. New fabrication plants (fabs) typically take three to five years to reach full production capacity. Consequently, the "memory bottleneck" is expected to persist through at least 2026, providing a sustained tailwind for companies with existing production lines.

Industry Responses and Strategic Pivots

Major technology firms are already adjusting their hardware strategies to account for the rise of agentic AI and OpenClaw.

- Intel (INTC): Despite losing ground in the GPU race, Intel remains a dominant force in the CPU market. The company is optimizing its latest Xeon processors to support agentic workflows, focusing on "Hybrid AI" where the CPU handles the logic and coordination of multiple small models running simultaneously.

- Nvidia (NVDA): Recognizing the shift toward coordination, Nvidia has introduced the Grace CPU platform. This move signals that even the leader of the GPU era acknowledges that the future of AI requires a more balanced architecture where the CPU plays a central role in system management.

- Alcoa (AA): The aluminum giant is positioning itself to meet the "green aluminum" demands of big tech firms, which are under pressure to make their AI infrastructure as carbon-neutral as possible.

Broader Impact and Economic Implications

The transition to an agent-based economy via platforms like OpenClaw represents a paradigm shift in how capital is allocated in the technology sector. In previous technological cycles, the most significant gains were often found in the "application layer"—the software companies that built products on top of new infrastructure. However, the sheer scale of the AI revolution suggests that the "infrastructure layer" may offer more sustained value.

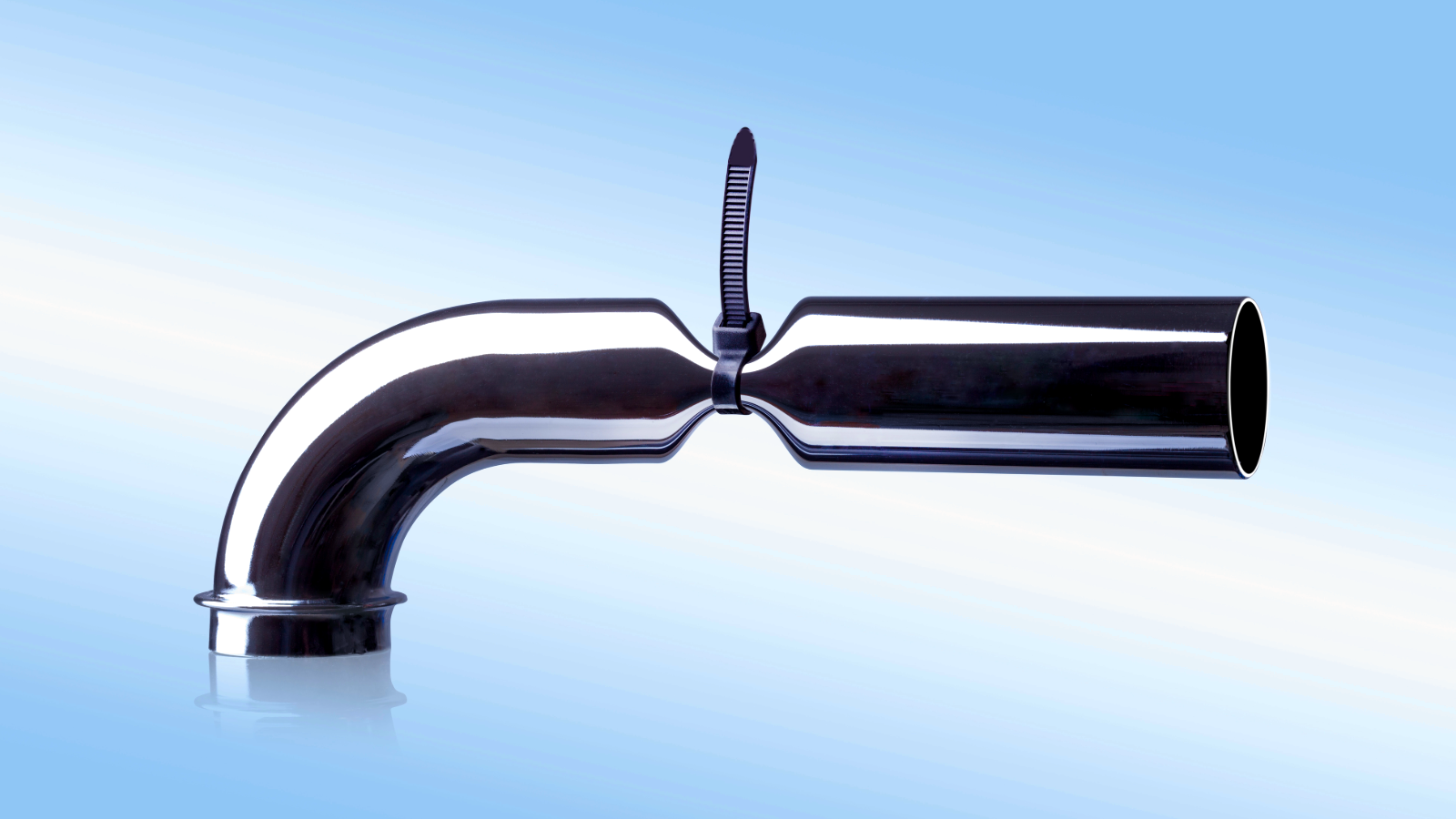

History shows that technological revolutions rarely move in a linear fashion; they move from one bottleneck to the next. In the early days of the internet, the bottleneck was bandwidth; in the early days of mobile, it was battery life and screen technology. In the era of agentic AI, the bottlenecks are coordination (CPUs), memory (HBM), and physical materials (aluminum and energy).

For the broader economy, the introduction of billions of "AI workers" could lead to a massive surge in productivity, but only if the underlying physical and silicon-based systems can support them. The current constraints in the CPU and memory markets suggest that the "Agent Supernova" will be a period of intense volatility as supply struggles to catch up with the rapid adoption of autonomous software.

As OpenClaw continues to gain traction, the focus of the global investment and technology communities will likely shift away from the "novelty" of AI toward the "utility" and "execution" of AI. This transition will redefine the winners and losers of the digital age, favoring those who control the essential bottlenecks of the new autonomous economy. The infrastructure buildout is no longer just about chips; it is about the entire physical and logical stack that allows an AI agent to function as a reliable participant in the global market.